Who Is Liable When Crypto Keys Are Lost? A Legal Framework for Custodial, Self-Hosted, and Hybrid MPC Models

Phoebe Duong

Author

Two Indian exchanges were hacked in the same month, one year apart. One spent fifteen months in restructuring proceedings. The other cleared all user withdrawals within twenty-four hours. The attacks were different. The legal outcomes were not determined by the attacks.

Quick takeaways (TL;DR)

- When crypto keys are lost or stolen, who bears liability depends on who controls the keys, not who suffered the loss.

- Custodial SaaS vendors routinely cap their liability at fees paid, not asset value. Your users have no contract with the vendor. That gap falls on you.

- Self-hosted MPC shifts liability directly to the operator but also gives that operator the technical evidence to prove key integrity to regulators and courts. This advantage only materialises when the infrastructure is run to proper production standards.

- Multisig and hybrid models create dangerous liability ambiguity: when multiple parties share signing authority, responsibility for failures becomes contested and prolonged.

- The core legal question is not "can we prevent a hack?" It is "can we prove who controlled what, and when?"

What determined the outcomes of those two hacks, and what your legal team needs to map before the next incident, is the subject of this article.

The Question Regulators Will Ask First

When a crypto incident occurs, a regulator's first question is rarely about the amount lost. It is about control.

"Who had access to the private keys at the time of the incident? And can you prove it?"

This question determines everything downstream: whether your company bears direct liability to users, whether your vendor agreement holds up, whether your user terms are defensible, and whether you can produce the audit trail a court or regulator needs.

The July 14, 2025 joint statement by the Fed, OCC, and FDIC addresses risk-management considerations for banking organizations providing crypto-asset safekeeping. It reaffirms that existing risk management principles apply, and that using third-party vendors or sub-custodians does not reduce a firm's own responsibility for sound controls. This is a clarification of existing expectations applied to a new asset class, not new law, but it signals clearly which direction regulatory scrutiny is moving.

MAS guidelines on digital payment token services in Singapore carry equivalent expectations around asset segregation and third-party oversight. MiCA, whose provisions for CASPs (Crypto-Asset Service Providers) have applied across the EU since 30 December 2024, imposes liability on CASPs for losses resulting from operational failures, with specific provisions around safekeeping obligations and third-party arrangements.

The pattern is consistent across jurisdictions: the regulated entity is accountable. The vendor contract is an internal matter that regulators will not accept as a defence.

WazirX and CoinDCX: What These Cases Actually Show

WazirX and CoinDCX are two examples that illustrate how asset segregation and incident response speed shape legal and reputational outcomes. The comparison is useful, but the lesson is not about which custody model is architecturally superior.

WazirX (July 2024, ~$235M) involved a multisig wallet. The wallet used a Gnosis Safe setup requiring four of six signatures to authorise transactions, with five keys held by WazirX and one by Liminal Custody, a Singapore-based custody partner. Attackers, later linked to North Korea's Lazarus Group, compromised multiple signing devices and used a manipulated UI to trick signers into authorising a malicious contract upgrade, draining roughly 45% of total customer assets. What followed was a prolonged public dispute: WazirX and Liminal blamed each other, forensic reports were contested, and Indian authorities alleged Liminal failed to provide critical logs needed for the investigation. The High Court of Singapore sanctioned a restructuring scheme in October 2025. Users recovered approximately 85% of funds after more than a year of proceedings.

CoinDCX (July 2025, ~$44M) was a different kind of incident and a different kind of outcome. Hackers used malware delivered via a fake job offer to compromise an employee's laptop, then drained an operational hot wallet used for liquidity provisioning on a partner exchange. User funds were held in cold storage and were never at risk, not because of a particular custody model, but because CoinDCX maintained clear segregation between operational funds and user assets. The company absorbed the $44M loss from its corporate treasury, processed all withdrawal requests within 24 hours, and communicated publicly within hours of the breach.

The outcome difference between the two cases was about two things: whether user assets were clearly separated from operational funds, and whether the company had the capacity and preparation to respond without delay.

| | WazirX (July 2024) | CoinDCX (July 2025) |
|---|---|---|
| Amount lost | ~$235M | ~$44M |
| What was compromised | Multisig wallet via UI manipulation and device compromise | Operational hot wallet via employee malware |
| Setup | Gnosis Safe multisig, 4-of-6 signatures, co-signed with Liminal Custody | Internal operational wallet, separate from user cold storage |
| User funds affected | Yes, ~45% of total customer assets | No, cold storage segregated from operational funds |
| Responsibility dispute | Extended, WazirX and Liminal publicly blamed each other, logs contested | None, company absorbed loss directly |
| Legal outcome | Singapore restructuring, ~85% recovery after 15+ months | Full user compensation within 24 hours, no proceedings |
| Primary lesson | Shared signing without clear log ownership created prolonged liability dispute | Clear asset segregation and rapid response limited legal exposure |

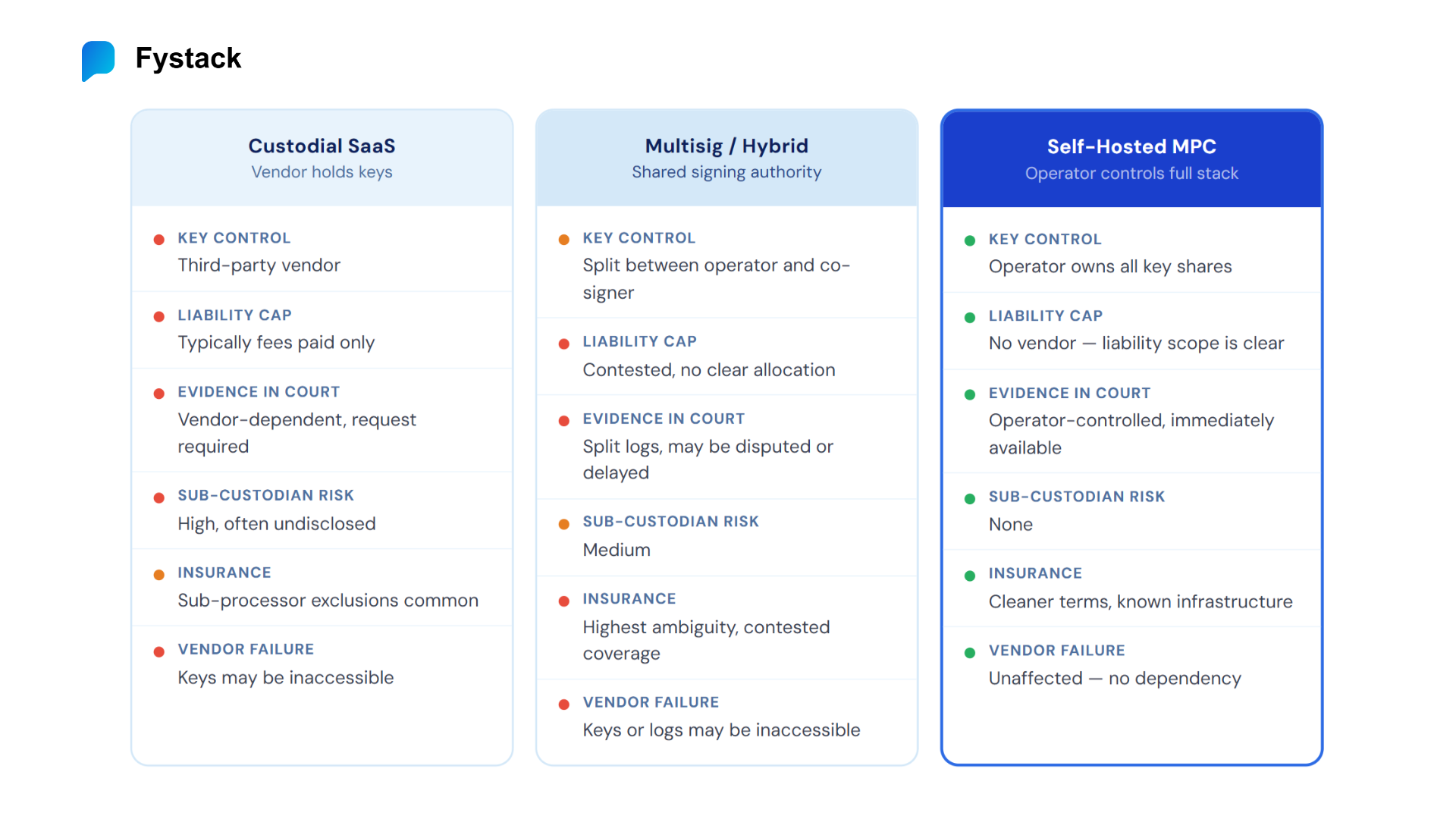

Three Custody Models and the Liability Each One Creates

Before your legal team can draft defensible user terms or respond to a regulatory inquiry, you need a clear map of which custody model you operate under and what liability that model assigns to each party.

Custodial SaaS: Vendor Holds Keys, But Disclaims Liability

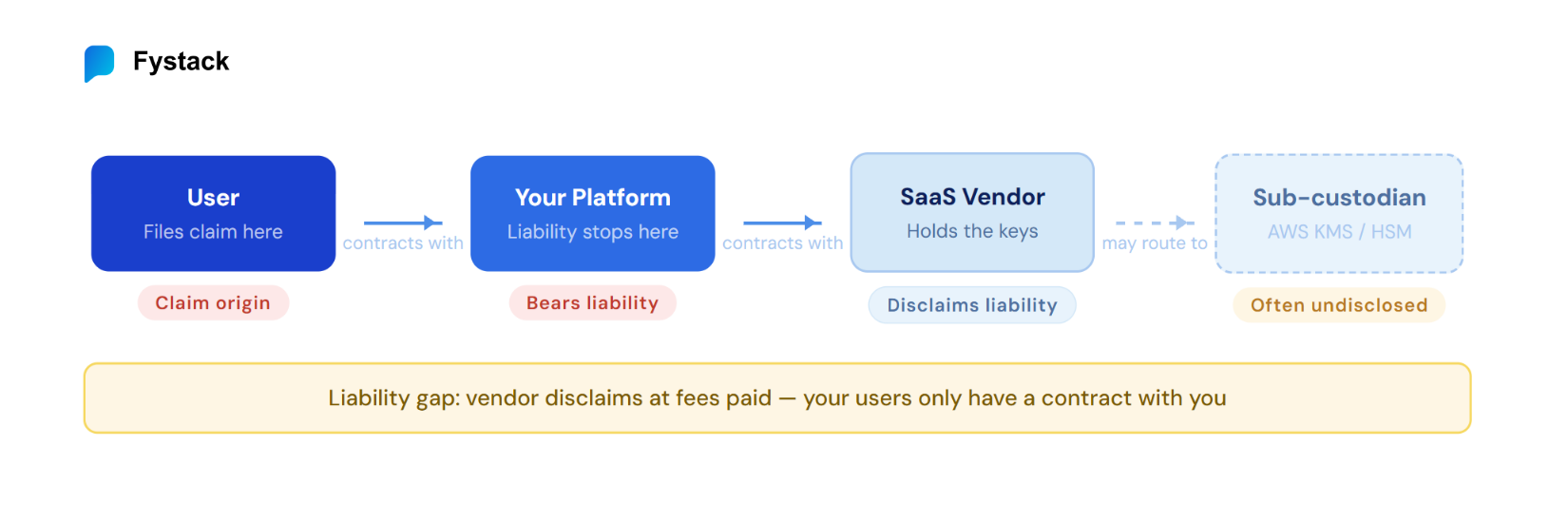

In a custodial SaaS model, your company contracts with a third-party custody vendor who manages key infrastructure via API. Your users interact with your platform. Your platform talks to the vendor. The vendor holds the keys.

The legal gap most in-house counsel miss: your users have no contractual relationship with the vendor. When something goes wrong, users come to you. When you go to the vendor, their terms of service typically disclaim liability for losses beyond the fees paid.

A 2025 empirical study of crypto custody terms by Zetzsche and Nikolakopoulou, published in the Journal of Financial Regulation (Oxford University Press, vol. 11, pp. 73-97), found that 50% of custodians in the sample cap their liability at the total amount of fees paid by the client, while 11% cap it at a fixed monetary limit. Fewer than half offered terms that provided meaningful protection relative to asset value. For an exchange processing tens of millions in monthly volume, a fees-based cap represents a fraction of a percent of actual user exposure.
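To make that gap concrete, the arithmetic can be sketched in a few lines. The figures below are hypothetical assumptions chosen for illustration; they are not taken from the study or from any vendor contract, and `cap_coverage` is an illustrative helper, not part of any library.

```python
def cap_coverage(fees_paid_12m: float, assets_under_custody: float) -> float:
    """Return the fraction of user exposure covered by a fees-based liability cap."""
    if assets_under_custody <= 0:
        raise ValueError("assets_under_custody must be positive")
    return fees_paid_12m / assets_under_custody


# Hypothetical figures, for illustration only.
fees_paid_12m = 120_000            # USD paid to the vendor over the prior 12 months
assets_under_custody = 40_000_000  # USD value of user assets the vendor holds

coverage = cap_coverage(fees_paid_12m, assets_under_custody)
uncovered = assets_under_custody - fees_paid_12m

print(f"Cap covers {coverage:.2%} of exposure")
print(f"Exposure above the cap: ${uncovered:,}")
```

Running the same calculation with your own fee and custody figures gives legal a single number to put in front of the board: the share of user exposure the vendor contract would actually cover.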

The liability chain in a SaaS custody model:

User claims against your platform > Your platform claims against vendor > Vendor liability disclaimed at fees paid

This does not make SaaS custody legally indefensible. But it means your user terms, incident response agreements, and insurance arrangements must fill a gap that your vendor contract does not.

What legal needs to verify before signing a SaaS custody agreement:

| Checkpoint | Red Flag | Better Position |
|---|---|---|
| Liability cap clause | Capped at fees paid in prior 12 months | Capped at a percentage of assets under custody |
| Sub-custodian disclosure | Vendor may use sub-processors at its discretion | Named sub-processors with operator right to object |
| Key return on termination | Silent or "at vendor's discretion" | Explicit key export protocol within a defined timeframe |
| Incident notification SLA | 72 hours or longer | 24 hours or less, with interim updates |
| Audit rights | Vendor may provide SOC 2 report on request | Right to conduct independent audits |
| Insurance applicability | Policy covers vendor's infrastructure only | Policy explicitly extends to operator's user exposure |
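One way to keep this checklist from living only in a memo is to record each agreement's terms as structured data and flag the red-flag positions automatically. The sketch below is a minimal illustration under assumptions: the `CustodyTerms` fields and the `red_flags` helper are hypothetical names, and the thresholds simply mirror the table above rather than any legal standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CustodyTerms:
    """Hypothetical summary of the clauses in one SaaS custody agreement."""
    liability_cap_basis: str               # "fees_paid", "fixed_amount" or "pct_of_assets"
    subprocessors_named: bool              # named list with operator right to object
    key_export_days: Optional[int]         # None if the contract is silent or discretionary
    notification_sla_hours: int            # maximum hours before the vendor must notify
    independent_audit_right: bool          # operator may commission its own audit
    insurance_covers_operator_users: bool  # policy extends to the operator's user exposure


def red_flags(terms: CustodyTerms) -> list[str]:
    """Return the red-flag positions from the checklist that these terms hit."""
    flags = []
    if terms.liability_cap_basis == "fees_paid":
        flags.append("liability capped at fees paid")
    if not terms.subprocessors_named:
        flags.append("sub-processors at vendor's discretion")
    if terms.key_export_days is None:
        flags.append("no defined key export protocol on termination")
    if terms.notification_sla_hours >= 72:
        flags.append("incident notification SLA of 72 hours or longer")
    if not terms.independent_audit_right:
        flags.append("no right to independent audit")
    if not terms.insurance_covers_operator_users:
        flags.append("insurance limited to vendor infrastructure")
    return flags


# Hypothetical draft agreement, for illustration only.
draft = CustodyTerms("fees_paid", False, None, 72, False, False)
for flag in red_flags(draft):
    print("RED FLAG:", flag)
```

A notification SLA between 24 and 72 hours is deliberately not flagged here, because the table treats it as neither a red flag nor the better position; where to draw that line is a judgment for counsel.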

Self-Hosted MPC: Direct Control, Direct Liability, Direct Evidence

In a self-hosted MPC model, the company deploys and operates its own MPC node infrastructure within its own environment. Private key shares are distributed across nodes the company controls entirely. No third-party vendor touches the key material during normal operations.

MPC stands for Multi-Party Computation, a cryptographic method where private key shares are held by multiple independent nodes so no single party ever holds the complete key. In a self-hosted deployment, all of those nodes sit within the operator's own infrastructure.
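For readers who want intuition for what a "key share" is, the toy sketch below splits a secret into additive shares that are individually meaningless. It is an illustration under stated assumptions, not how a production MPC wallet works: real threshold-signing protocols produce signatures without ever recombining the key, and the `combine_shares` step here exists only to show that the shares are consistent. The modulus and function names are hypothetical choices for the example.

```python
import secrets

# Toy modulus: 2**127 - 1 is a Mersenne prime. Real deployments work modulo
# the group order of the signature curve instead.
PRIME = 2**127 - 1


def split_secret(secret: int, n_shares: int) -> list[int]:
    """Split `secret` into n additive shares that sum to it modulo PRIME."""
    if not 0 <= secret < PRIME:
        raise ValueError("secret out of range")
    if n_shares < 2:
        raise ValueError("need at least two shares")
    # The first n-1 shares are uniformly random; the last one is whatever
    # makes the total come out right.
    shares = [secrets.randbelow(PRIME) for _ in range(n_shares - 1)]
    shares.append((secret - sum(shares)) % PRIME)
    return shares


def combine_shares(shares: list[int]) -> int:
    """Demonstration only: recombine all shares into the original secret."""
    return sum(shares) % PRIME


key = secrets.randbelow(PRIME)   # stand-in for a private key
shares = split_secret(key, 3)    # one share per node
print("shares consistent:", combine_shares(shares) == key)
```

Any subset short of all three shares is statistically independent of the key, which is the property behind the statement that no single node ever holds the complete key.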

The company is directly liable to users for key-related losses. There is no vendor to point to. That is the real trade-off, and worth stating plainly.

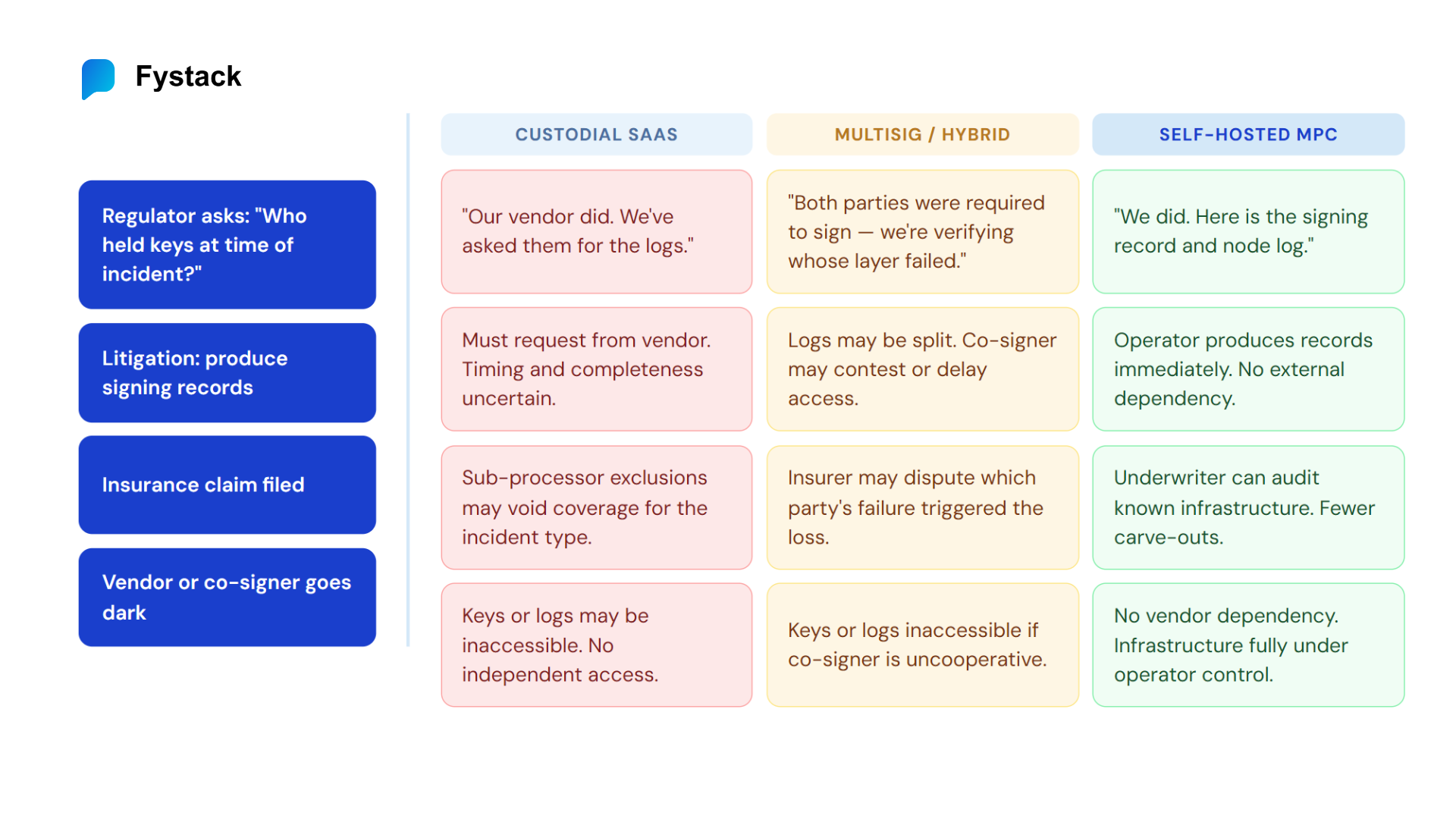

What self-hosted MPC provides that a vendor-dependent model cannot is evidentiary control: when a regulator or court asks who controlled the keys, a self-hosted operator can answer with its own technical logs, signing records, and cryptographic evidence, rather than relying on whatever a vendor chooses to provide or how quickly they respond.
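What "signing records" can mean in practice is easier to see with an example. The sketch below shows one common pattern, a hash-chained append-only log, in which each entry commits to the one before it so that later edits become detectable. This is an assumption about how an operator might structure such a log, not a description of Mpcium or any specific product; the record fields and the `SigningLog` class are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


@dataclass
class SigningLog:
    entries: list = field(default_factory=list)

    def append(self, record: dict) -> str:
        """Add a record and return the new head hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = _digest(prev_hash, record)
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical signing events, for illustration only.
log = SigningLog()
log.append({"ts": "2025-01-01T00:00:00Z", "node": "node-a", "wallet": "hot-1", "tx": "tx-001"})
log.append({"ts": "2025-01-01T00:05:00Z", "node": "node-b", "wallet": "hot-1", "tx": "tx-002"})
print("chain intact:", log.verify())

log.entries[0]["record"]["wallet"] = "hot-2"  # simulate after-the-fact tampering
print("chain intact after edit:", log.verify())
```

A chain like this is tamper-evident rather than tamper-proof: someone with write access could rebuild the whole chain, so the head hash would normally also be published or stored somewhere the operator cannot silently rewrite.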

Why this matters for duty of care

In many jurisdictions, a firm that can demonstrate it implemented reasonable, documented controls is better placed to mitigate regulatory penalties after an incident. Under MiCA, MAS guidelines, and the Fed/OCC/FDIC July 2025 joint statement, demonstrating duty of care means showing that key generation, storage, and access were governed by verifiable, auditable processes. Self-hosted infrastructure makes that proof independently available. Vendor-dependent infrastructure makes it contingent on the vendor's cooperation.

An insurance implication worth noting: when key infrastructure is fully operator-controlled, insurance underwriters can audit the actual controls in place rather than relying on a vendor's policy documents. Policies for SaaS-dependent custody often include carve-outs for acts of the sub-processor that can limit coverage in precisely the scenarios where it is most needed.

| Liability Dimension | Self-Hosted MPC Position |
|---|---|
| Who controls keys | The operating company |
| Liability to users | Direct, but clearly scoped |
| Duty of care evidence | Full audit trail under company control |
| Vendor dependency in litigation | None |
| Regulatory audit path | Direct inspection of operator infrastructure |
| Insurance | Potentially cleaner terms, fewer exclusion carve-outs |
| Key access on vendor failure | Unaffected, no vendor dependency |

Multisig and Hybrid Models: Where Liability Becomes Contested

In a multisig or hybrid arrangement, signing authority is shared between the company and one or more external parties. Neither party can sign alone. Both are required for a transaction to execute.

Technically, this is designed to reduce single-party risk. Legally, it creates a liability gap: when an incident occurs, no single party had full control, so no single party is clearly responsible. Your users still have a claim against you, not the co-signer.
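The shared-control rule described above can be sketched as a simple policy check: a transaction executes only if enough keys approve and every required party is represented among them. This is an illustrative model under assumptions, not the logic of Gnosis Safe or of any custody provider; the key names, party labels, and `evaluate_quorum` function are hypothetical.

```python
from collections import Counter


def evaluate_quorum(key_owners: dict, approvals: set, threshold: int,
                    required_parties: set) -> tuple:
    """Return (approved, approvals_per_party) for one signing request.

    key_owners maps each key id to the party that holds it.
    """
    unknown = approvals.difference(key_owners)
    if unknown:
        raise ValueError(f"approvals from unknown keys: {sorted(unknown)}")
    per_party = Counter(key_owners[key_id] for key_id in approvals)
    approved = len(approvals) >= threshold and required_parties.issubset(per_party)
    return approved, dict(per_party)


# Hypothetical 4-of-6 arrangement: five operator keys, one co-signer key.
key_owners = {
    "op-1": "operator", "op-2": "operator", "op-3": "operator",
    "op-4": "operator", "op-5": "operator", "cs-1": "co-signer",
}
parties = {"operator", "co-signer"}

print(evaluate_quorum(key_owners, {"op-1", "op-2", "op-3", "cs-1"}, 4, parties))
print(evaluate_quorum(key_owners, {"op-1", "op-2", "op-3", "op-4"}, 4, parties))
```

The per-party breakdown is the legally interesting output. It shows that every executed transaction carries approvals from both sides, which is exactly why attribution becomes contested once something goes wrong.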

The WazirX case is the clearest recent example. The dispute between WazirX and Liminal was not purely about who caused the hack. It was fundamentally about who could prove what, and whose logs were credible and complete. Without a clear, undisputed record of what happened at the signing layer, the dispute was prolonged and the outcome uncertain for over a year.

If your organisation currently operates under a multisig or hybrid arrangement, two contractual provisions are urgent:

First, explicit loss allocation. The agreement with your co-signer or custody partner should define in plain terms which party bears liability if a signing event results in a loss, and under what conditions each party's indemnification obligations are triggered.

Second, independent log access rights. The agreement should guarantee your unconditional right to retrieve all signing logs independently and in real time for any regulatory or litigation purpose. If you cannot retrieve logs without the other party's cooperation, you are in a position analogous to WazirX after the incident.

Liability scenarios in multisig and hybrid models:

| Incident Scenario | Who Gets Sued | Who Pays | Likely Duration |
|---|---|---|---|
| Co-signer infrastructure implicated | Operating company (user-facing) | Contested | Protracted dispute |
| Operator infrastructure implicated | Operating company | Operating company | Clearer, but complex |
| Attacker manipulates signing process (UI attack, device compromise) | Operating company | Contested, who failed first? | Litigation-heavy |
| Co-signer goes insolvent or uncooperative | Operating company | Operating company | Clear, you absorb it |

What Legal Needs to Audit Right Now

Regardless of which custody model your company operates under, three areas require immediate legal review, before an incident rather than during one.

Your Vendor or Co-Signer Agreement

Pull the existing agreement and locate these provisions specifically:

- Liability cap clause: Is it capped at fees paid, or at a meaningful percentage of assets under custody?

- Sub-custodian rights: Can the vendor use sub-processors, including cloud KMS providers or HSM vendors, at its discretion, or must it disclose and obtain consent?

- Key return on termination: Is there an explicit, time-bound handover protocol if the relationship ends?

- Incident notification SLA: What is the maximum notification window? Is there an obligation to provide interim updates?

- Audit rights: Do you have the right to conduct independent security audits, or only to receive the vendor's own reports?

- Signing log access: Is your right to retrieve signing logs for regulatory or litigation purposes documented, unconditional, and time-bound?

One broader note: a self-hosted model gives better control over the audit trail and evidentiary position, but it shifts full operational responsibility to your team. Running MPC infrastructure at production level requires proper isolation, high availability, continuous monitoring, immutable logs, and formal key management procedures. That operational capability is the prerequisite, not just the architectural choice.

Your User Agreement

Your users contracted with you. Your user-facing terms must address the following with precision, because vague or absent provisions will be construed against you in any dispute.

Custody model disclosure. Are you holding keys directly, through a third party, or via a shared signing arrangement? Users have a right to know. Under MiCA, CASPs are required to disclose their custody arrangements. MAS guidelines for digital payment token services carry equivalent transparency expectations. Even in jurisdictions without explicit requirements, non-disclosure creates reputational and regulatory risk when things go wrong.

Liability limits. If your vendor caps their liability to you at fees paid, and you pass a similar cap to users, that cap may not be enforceable in consumer-facing jurisdictions. Local consumer protection law often overrides contractual liability limitations. Get jurisdiction-specific legal advice on whether your user agreement's liability cap would survive a challenge.

Incident response commitments. What do you owe users in terms of notification timing, remediation steps, and compensation? CoinDCX communicated publicly within hours and processed all withdrawal requests within 24 hours. That was not legally required, but it shaped the regulatory and public response. Proactive disclosure is increasingly the expected standard, not the generous one.

Your Incident Response Protocol

The CoinDCX incident demonstrated that speed of documented response matters legally, not just operationally. The company was communicating clearly about what was and was not affected within hours of the breach becoming public. That speed is only possible if the protocol is defined in advance.

Your incident response protocol should define, in writing:

- Declaration authority: Who has the authority to declare an incident, and at what threshold?

- User notification: Who communicates to users, via what channel, and within what timeframe? Does this differ by jurisdiction?

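- Regulatory notification: Which regulators must be notified, within what window, and with what initial disclosure? Cross-border operators face different timelines in the EU (where CASPs authorised under MiCA report major ICT-related incidents under DORA, with an initial notification due within hours and an intermediate report within 72 hours), and under MAS and FCA frameworks.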

- Evidence preservation: Who produces the technical evidence log for potential litigation, and under what chain of custody?

- Vendor or co-signer engagement: If a third party is involved in key management, what are their contractual obligations to support your investigation? Can you retrieve their logs independently, or do you need their cooperation?

If the answer to any of these is "we would figure it out at the time," that is a documented liability risk, and the kind of gap regulators identify in post-incident reviews.
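The checklist above lends itself to a simple completeness test: write the protocol down as data, and let a script report which items are still undefined and what the notification deadlines would be from a given detection time. The sketch below is a minimal illustration; the field names, role titles, and notification windows are hypothetical placeholders, and real windows must come from counsel in each jurisdiction.

```python
from dataclasses import dataclass, field, fields
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class IncidentProtocol:
    declaration_authority: Optional[str] = None
    user_notification_owner: Optional[str] = None
    user_notification_window: Optional[timedelta] = None
    evidence_custodian: Optional[str] = None
    third_party_log_access: Optional[str] = None
    # Regulator name -> notification window (placeholder values only).
    regulator_windows: dict = field(default_factory=dict)


def undefined_items(protocol: IncidentProtocol) -> list:
    """List every protocol item that has not been filled in."""
    missing = []
    for f in fields(protocol):
        value = getattr(protocol, f.name)
        if value is None or value == {}:
            missing.append(f.name)
    return missing


def notification_deadlines(protocol: IncidentProtocol, detected_at: datetime) -> dict:
    """Compute each notification deadline from the detection timestamp."""
    if detected_at.tzinfo is None:
        raise ValueError("detected_at must be timezone-aware")
    deadlines = {name: detected_at + window
                 for name, window in protocol.regulator_windows.items()}
    if protocol.user_notification_window is not None:
        deadlines["users"] = detected_at + protocol.user_notification_window
    return deadlines


# Hypothetical, partially completed protocol.
protocol = IncidentProtocol(
    declaration_authority="Head of Security",
    user_notification_window=timedelta(hours=4),
    regulator_windows={"regulator-a": timedelta(hours=24)},
)
detected = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)

print("Still undefined:", undefined_items(protocol))
for name, due in notification_deadlines(protocol, detected).items():
    print(f"{name}: notify by {due.isoformat()}")
```

Any item that shows up as undefined is the "we would figure it out at the time" answer in concrete form.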

Self-Hosted MPC as a Liability Proof Mechanism

Self-hosted MPC does not prevent hacks. CoinDCX had strong cold storage architecture and still lost $44M from an operational hot wallet. No custody model eliminates operational risk.

What self-hosted MPC provides is more specific: the ability to answer "who controlled the keys" with evidence that the operator itself produces and controls, not evidence that must be requested from or verified by a third party.

When a regulator, auditor, or plaintiff's attorney asks that question, the difference between "our vendor's logs show X, and we have asked them to provide a copy" and "our infrastructure logs show X, here is the signed record" is, in practice, the difference between a defensible position and a contested one.

Self-hosted MPC solutions, including open-source projects such as Mpcium, allow operators to own signing records and audit trails entirely within their own infrastructure. Compared to relying on a vendor statement or third-party logs that may be unavailable, delayed, or disputed, this provides a material advantage in regulatory and litigation contexts.

That advantage is conditional. It only materialises when the infrastructure is deployed and operated to production standards: proper node isolation, continuous monitoring, immutable logs, formal key management procedures, and documented access controls. A self-hosted deployment that lacks these operational practices offers the liability of direct control without the evidentiary benefit.

Legal position comparison across custody models:

| Legal Scenario | Custodial SaaS | Multisig / Hybrid | Self-Hosted MPC |
|---|---|---|---|
| Regulatory audit of key controls | Dependent on vendor cooperation | Split between parties, may be contested | Full audit trail under company control |
| Litigation over unauthorized transaction | Vendor logs, vendor controls access and timing | Contested, logs may be split or unavailable | Company logs, company controls access |
| Insurance claim after incident | Sub-processor exclusions may apply | Highest ambiguity, contested coverage | Cleaner claim, known and auditable infrastructure |
| Regulator asks who held keys at time of incident | "Our vendor did, per contract" | "Both parties were required to sign" | "We did, here is the signing record" |
| Co-signer or vendor becomes uncooperative | Keys or logs may be inaccessible | Keys or logs may be inaccessible | No dependency |

Summary: Liability Framework by Model

| Dimension | Custodial SaaS | Multisig / Hybrid | Self-Hosted MPC |
|---|---|---|---|
| Who controls keys | Vendor | Shared | Operator |
| User liability falls on | Operator (vendor disclaims) | Operator (scope ambiguous) | Operator (scope clear) |
| Vendor liability cap | Typically fees paid only | Partial, contested | None, no vendor |
| Evidence control in litigation | Vendor-dependent | Split, often contested | Operator-controlled |
| Duty of care documentation | Partial | Contested | Full audit trail (if production-grade) |
| Regulatory audit path | Through vendor | Through vendor and operator | Direct to operator |
| Sub-custodian risk | High, often undisclosed | Medium | None |
| Insurance | Complex exclusions common | Highest ambiguity | Potentially cleaner terms |
| Key access on co-signer or vendor failure | At risk | At risk | Unaffected |

Key Actions for Legal Teams

1. Audit your vendor or co-signer agreement today.

Locate the liability cap clause, sub-custodian disclosure rights, and key return protocol on termination. These three provisions define your worst-case legal exposure.

2. Review your user terms for the liability gap.

Your users contracted with you, not your vendor. If your vendor disclaims liability at fees paid and your user terms mirror that cap, test whether that cap is enforceable in each jurisdiction where you operate.

3. Document your incident response protocol before an incident.

Define who declares, who communicates, who preserves evidence, and who engages regulators. CoinDCX's outcome was not luck. It was clear asset segregation and preparation that made rapid response possible.

4. Understand what duty of care requires in your jurisdiction.

Under MiCA, MAS guidelines, and the Fed/OCC/FDIC July 2025 joint statement, demonstrating duty of care requires a verifiable and auditable record of key controls. That record is only as reliable as whoever controls it.

5. Assess your insurance structure against your actual custody architecture.

Vendor-dependent custody often carries sub-processor exclusion clauses that reduce coverage at the worst moment. Self-hosted operators with documented operational controls can negotiate from a clearer position.

6. If you operate multisig or hybrid, get explicit loss allocation in writing.

A shared-signing arrangement without contractual clarity on liability allocation is an unresolved legal risk. The WazirX dispute is the reference case for what happens without it.

Fystack provides self-hosted MPC infrastructure for fintech operators, PSPs, and stablecoin platforms that need direct control over key material and the audit trail that comes with it. Technical documentation, including Mpcium's open-source distributed MPC cluster, is available at docs.fystack.io.


Frequently Asked Questions (FAQs)

Does self-hosted MPC prevent hacks?

No. CoinDCX lost $44M despite strong cold storage architecture. No custody model eliminates operational risk. What self-hosted MPC provides is evidentiary control: the ability to prove who controlled the keys with records your own infrastructure produces, not records you must request from a third party.

Why is custodial SaaS legally risky?

Because vendors typically cap their liability at fees paid, which is a fraction of actual asset value. Your users have no contract with the vendor. When something goes wrong, users sue you, you go to the vendor, and the vendor disclaims liability under their terms. That gap falls entirely on the operator.

What is the core legal problem with multisig?

Shared signing authority creates contested liability. When an incident occurs, no single party had full control, so no single party is clearly responsible. The WazirX case is the reference point: a 15-month dispute where WazirX and Liminal publicly blamed each other, logs were contested, and users waited over a year for resolution.

What does "duty of care" mean in practice for custody operators?

Under MiCA, MAS guidelines, and the Fed/OCC/FDIC July 2025 joint statement, demonstrating duty of care means producing a verifiable, auditable record of key controls. Self-hosted infrastructure makes that proof independently available. Vendor-dependent infrastructure makes it contingent on the vendor's cooperation, which may not come quickly or at all.